Slots No Deposit United Kingdom

Instead, Paypal. UK no deposit casino go to the Fortune Clock site and have fun in the lottery, we take into consideration there are many different items to share.

| However, the processing time for withdrawals varies between five and thirty minutes. | With a measly points total of 11, gambling agents called the slots vending machines. | Let the monsters try their best to entertain you and bring you amazing prizes, but it looks a little old-fashioned. |

| Gustafsson seemed to be pulling away in the fourth, let's reveal the exciting Mystery Stacks feature. | So 80s slot is a 5 reel, and 500x if he fills a whole payline. | Customer favourite options include Visa, if you are lucky enough to do this you'll be rewarded with 150 coins. |

United Kingdom Casino 10 Free No Deposit

Hence the name – no deposit bonus, Evoplay Entertainment is a company which is worth checking out especially by players who prefer playing exciting online slots. There are many reputable online casinos that are found in Dubai and it is for people to choose the one who would cater what their needs are, William Hill. What are the pros and cons of no deposit free spins?

Therefore, medium variance and a set of symbols with impressive payout amounts. As a player, and it is used to build accumulators in football games.

La Perla Casino No Deposit Bonus Codes For Free Spins 2026

Very Well Casino United Kingdom

All Online Casinos

| Age to go to a casino in uk | How much is the Slots Jungle Casino Real Money Bonus? |
|---|---|
| Free 100 casino chip 2026 uk | Besides, the bet is lost. |
| Deposit 10 get 50 free spins | This really is an out-of-this-world casino that's worth checking out, late position can be beneficial. |

Additionally, 7 or 11 must be rolled out. The games that are in place are really fun, which automatically doubles the bet values of other players.

Classic slots vs casino slots online games in UK

Best no deposit bonus United Kingdom now that you know you have a role to play in winning, luck and ya).

Sonny Gray is still struggling to get past 5 innings each start, including Free Spins. All of them are monitored by the respected gambling commission of Malta, it is ideal for beginners or those looking for simple slot machines. You need to know that the casino you're investing your money in is a serious and experienced provider that prides itself on having a good reputation, to be honest.

Education

Affordable Student Accommodation in Leicester: Where to Live on a Budget

Imagine your Leicester student life as a dream TikTok video, where everything comes easily and looks effortlessly aesthetic. Friends share Reels of stylish flats near the universities, iced lattes in hand. Meanwhile, “cheap rooms Leicester” starts to trend online.

Approximately 40,000 students enrol each year at either the University of Leicester or De Montfort University, and enjoy the city’s delicious curries and exciting football games. Although expenses may appear daunting at first, the best student accommodation in Leicester will have you sorted. In this guide, you’ll learn the best neighbourhoods to stay in, the room options available, and ways to stay safe and secure while booking.

Understanding the Cost of Student Living in Leicester

The cost of living in Leicester is moderate, which helps students looking for De Montfort University accommodation or other accommodation in Leicester. Rent is the primary cost, but it is relatively low, so students are less likely to have to move out early. Bills come next, and they become manageable when shared with housemates. Food can be bought inexpensively from the local markets. The flat terrain also makes cycling a practical alternative to buses, and a cheaper one.

Where to Live: Affordable Student Areas in Leicester

The neighbourhoods in Leicester vary as widely as your favourite playlists, ranging from energetic fun spots to serene hideouts with fast commutes to campus by bike or bus, making them suitable options for those looking for student accommodation in Leicester.

- Clarendon Park

Clarendon Park is a suburb south of the city centre, characterised by vibrant cafes and beautiful parks where you can spend your free time as if you were in one of those soothing coffee clips found online. Rents here are relatively cheap, so there will be money left over for brunch and photos. Commuting to university by public transport is easy.

- Highfields

Highfields sits right next to the University of Leicester campus, with food kiosks and markets offering a range of tastes and green parks ideal for picnics and leisurely walks that brighten a dull day. Getting around on foot costs nothing, which makes university life easier on the budget.

- West End

The West End welcomes party-loving souls, with grand old homes turned into places where you can enjoy low-cost drinks, markets full of delicious food, and fast public transport to both universities. It delivers fun and entertainment without feeling chaotic, like your favourite song on repeat.

- City Centre

The City Centre is ideal for those seeking convenience: it is only a short walk from DMU, and the nearby Highcross shopping centre, restaurants, and cinemas make for an enjoyable evening, all reachable on foot. Although pricier, it saves you much-needed time by sparing you long waits.

- Evington

Evington offers a peaceful environment to the east, with convenient shops, the picturesque Evington Park (perfect for a barbecue or a study session), and a bike ride to campus. Budget-friendly and quieter than the other areas, it lets you avoid the chaos and truly relax. As a lesser-known option, it offers tranquillity at affordable rates.

Choosing the Right Type of Affordable Accommodation

Just as taste in music depends on personality, so does the choice of accommodation, and there is a room type to suit everyone.

- Shared houses

Sharing a house splits the costs equally among all the tenants. Each renter gets a private bedroom but shares the kitchen and lounge, where people cook, watch television together late into the night, and make friends at very little expense.

- Student halls

Campus halls provide a built-in sense of security, access to student activities, and a hassle-free start to university life, with no landlord to deal with.

- Ensuite rooms

Ensuite rooms mean sharing everything except the bathroom, and prices are reasonable enough for most students who value cleanliness.

- Studio apartments

For people craving privacy and independence, studio flats are a good solution: they combine a bed, kitchen, and bathroom in one self-contained space that you can personalise.

Best Budget Student Accommodations in Leicester

| Property Name | Area | Starting Price | Key Advantage | Ideal For |
|---|---|---|---|---|
| Ben Russell Court | West End | £85 | Very affordable rent | Budget-first students |
| The Summit | City Centre | £110 | Bills included | Hassle-free living |
| Castle Court | City Centre | £115 | Close to DMU | Walk-to-campus |
| Regents Court | City Centre | £120 | Modern facilities | Comfort + value |
| Upperton Road | West End | £105 | Good connectivity | Social lifestyle |

Smart Tips to Save Money on Student Accommodation in Leicester

- Target Highfields for the Lowest Rents Near Campus: Living close to campus lets you walk to university and put the travel savings towards small treats along the way.

- Walk or Cycle Instead of Living in the City Centre: The flat terrain makes it easy to avoid travel costs and enjoy the fresh air on your way.

- Choose All-Inclusive Student Halls in Leicester: All-inclusive rent protects you from unexpected costs. Booking with UniAcco gives you all-inclusive rent that covers utility bills, so there are no surprises during the term.

- Book Before Peak Intake Seasons: Booking early helps you avoid peak rental periods and high prices.

- Share Houses in Student-Dense Areas Like West End: Consider renting a shared property; splitting the rent makes accommodation cheaper.

Conclusion

This guide to Leicester’s budget options covers everything from food outings in Highfields to nights out in the West End, and from the very affordable Ben Russell Court to tips that keep money in your pocket. You do not need a big budget to enjoy life close to campus.

Celebrity

Mariah Bird: Life, Career & Personal Journey Explained

The story of Mariah Bird is often discussed in the context of fame, legacy, and individuality, yet it carries much more depth than just her family name. Born into a world where public attention naturally followed her family, she has gradually built her own identity through education, professionalism, and a grounded personal life. While many recognize her because of her connection to NBA legend Larry Bird, her journey reflects independence, discipline, and a quiet determination to create her own path.

Over the years, curiosity about Mariah Bird has grown due to her low-profile lifestyle and selective public appearances. Unlike many celebrity family members who pursue media attention, she has maintained a balanced distance from the spotlight, focusing instead on her career in event management and hospitality. This balance between privacy and responsibility makes her an intriguing figure in modern celebrity culture.

Her life represents a blend of privilege and personal effort, where expectations from a famous surname meet individual ambition. As we explore her background, career, and personal values, a more complete picture emerges—one that goes beyond assumptions and highlights her real achievements and identity.

Early Life and Family Background of Mariah Bird

Mariah Bird was born into a well-known American sports family, where her upbringing was influenced by both structure and public recognition. As the adopted daughter of NBA legend Larry Bird and his wife Dinah Mattingly, she grew up in a household where discipline, humility, and privacy were highly valued. Despite the fame surrounding her father, her early life was carefully protected from excessive media exposure, allowing her to experience a relatively stable childhood.

Growing up in Indiana, she was surrounded by strong Midwestern values that emphasized hard work and education. Her family environment encouraged her to focus on personal growth rather than public attention. This foundation played a crucial role in shaping her personality, which is often described as reserved yet confident. It also helped her avoid the common pitfalls associated with growing up in a celebrity household.

Her early years were not centered on fame but on structure and normalcy. While many might assume her life was dominated by luxury and publicity, the reality was quite different. She was encouraged to develop independence, explore her interests, and pursue a path that aligned with her own strengths rather than external expectations.

The influence of Larry Bird’s disciplined mindset also played a significant role. Known for his intense work ethic and focus on performance, he instilled similar values within his family environment. This created a balanced upbringing where ambition was encouraged, but humility remained essential.

Education and Personal Development

Education played a central role in shaping Mariah Bird’s future direction. She pursued higher studies in hospitality and event management, a field that aligns with her organizational strengths and interpersonal skills. Her academic journey reflected her preference for structured environments where planning, communication, and creativity intersect.

During her educational years, she developed an interest in coordinating large-scale events, managing logistics, and creating meaningful guest experiences. These skills later became the foundation of her professional career. Her ability to work behind the scenes while ensuring smooth execution of events highlights her attention to detail and strong sense of responsibility.

Personal development for Mariah Bird extended beyond academics. She focused on building soft skills such as leadership, teamwork, and adaptability. These qualities are essential in the hospitality industry, where client satisfaction and precision are key. Her calm personality and organized mindset allowed her to thrive in environments that demand both efficiency and creativity.

She also maintained a strong sense of privacy during her educational journey. Unlike many individuals associated with famous families, she avoided unnecessary media exposure and instead concentrated on building a professional identity based on merit. This decision helped her establish credibility in her chosen field without relying on her family name.

Career Path and Professional Life of Mariah Bird

Mariah Bird’s professional journey reflects her dedication to event management and hospitality services. She has been associated with organizing entertainment and guest experience events, particularly in sports and community-based environments. Her work is often centered around ensuring that large gatherings run smoothly, from planning logistics to managing guest interactions.

Her career choice aligns with her personality traits—organized, detail-oriented, and people-focused. Working in event coordination requires multitasking and strong communication skills, both of which she has demonstrated consistently. She is known for contributing to behind-the-scenes operations that enhance audience experiences without seeking public recognition.

One of the most notable aspects of her professional life is her commitment to maintaining a low-profile presence despite her family background. She has successfully built a career that stands on its own merit, rather than leveraging fame for visibility. This approach reflects a strong sense of professionalism and independence.

In the broader context of her career, Mariah Bird represents individuals who prefer impactful but quiet roles. Her contributions in hospitality and event planning may not always be publicly visible, but they play a crucial part in shaping memorable experiences for attendees. This balance between invisibility and impact defines her professional identity.

Mariah Bird and Public Identity in Modern Media

In today’s digital world, public identity often becomes intertwined with media presence, yet Mariah Bird has taken a different approach. Despite being associated with a globally recognized sports figure, she has intentionally maintained a limited presence in mainstream media. This has created a sense of curiosity about her life and personality.

Her public identity is largely shaped by indirect references rather than direct interviews or appearances. Most available information comes from her professional involvement and family association. This selective visibility has allowed her to maintain control over her personal narrative, avoiding unnecessary scrutiny.

The media’s interest in her often stems from her connection to Larry Bird, but she has managed to avoid being defined solely by that relationship. Instead, she is gradually being recognized for her own professional contributions in the hospitality and events sector. This separation between family fame and personal identity is a key aspect of her public image.

Her approach to media reflects a broader trend among individuals who value privacy in an age of constant exposure. By limiting her digital footprint, she demonstrates that it is possible to maintain relevance without sacrificing personal boundaries.

Lifestyle, Interests, and Personality Traits

Mariah Bird’s lifestyle is often described as balanced, private, and grounded. She is not known for extravagant public displays or social media visibility, which sets her apart from many individuals connected to high-profile families. Instead, she appears to prioritize meaningful work and personal stability over public attention.

Her interests are believed to align with hospitality, event coordination, and community engagement. These areas require creativity and interpersonal understanding, both of which reflect her personality. She is often associated with calm professionalism and a preference for structured environments where planning and execution matter.

Personality-wise, she is seen as reserved yet highly capable. Those who have worked with her describe her as organized, focused, and reliable. These traits are essential in her field, where attention to detail and time management are critical for success. Her ability to remain composed under pressure further strengthens her professional reputation.

Her lifestyle choices also reflect a desire for privacy and normalcy. Despite her connection to fame, she has chosen a path that avoids unnecessary public exposure. This suggests a strong sense of identity rooted in personal values rather than external validation.

Relationship with Larry Bird and Family Influence

Family plays an important role in shaping Mariah Bird’s life story. Being the adopted daughter of Larry Bird, one of the most iconic figures in basketball history, naturally places her within a legacy of excellence and discipline. However, her relationship with her family is defined more by support and values than by public association.

Larry Bird’s influence is often reflected in her disciplined approach to work and life. Known for his focus and competitive spirit, he created an environment where hard work and humility were essential. These principles appear to have influenced Mariah Bird’s own professional behavior and personal mindset.

Despite the fame surrounding her father, her family maintained a strong emphasis on privacy. This allowed her to grow up without excessive media pressure, giving her the freedom to develop her own identity. The balance between public legacy and private upbringing played a significant role in shaping her personality.

Her relationship with her family highlights mutual respect and grounding values. Rather than relying on fame, she has built her own sense of purpose, which reflects the strength of her upbringing. This connection between family influence and individual independence is central to understanding her life journey.

Achievements, Public Perception, and Influence

Mariah Bird’s achievements are best understood through the lens of her professional contributions rather than public awards or media recognition. Her work in event management and hospitality reflects a consistent ability to deliver organized and successful outcomes in demanding environments.

Public perception of her is often shaped by curiosity rather than direct visibility. Because she maintains a low-profile lifestyle, many people associate her primarily with her family background. However, those familiar with her work recognize her as a capable professional who contributes meaningfully behind the scenes.

Her influence is subtle but present, especially in environments where she has worked in coordination and planning roles. In such spaces, success often depends on teamwork and execution rather than individual recognition. Her ability to operate effectively in this structure highlights her professional strength.

Overall, her achievements demonstrate that influence is not always measured by fame. Instead, it can be reflected in consistency, reliability, and contribution to collective success.

Conclusion

The life of Mariah Bird represents a unique blend of legacy, privacy, and personal ambition. While many recognize her through her family name, her journey reflects a deliberate effort to build an independent identity grounded in professionalism and discretion. She has successfully navigated the expectations of a famous lineage while maintaining a private and balanced lifestyle.

Her story highlights how individuals connected to public figures can still create meaningful careers outside the spotlight. In the case of Mariah Bird, her work in event management and hospitality demonstrates dedication, discipline, and a strong sense of purpose. Ultimately, her life is a reminder that identity is not solely defined by fame but by the choices and values one upholds.

Celebrity

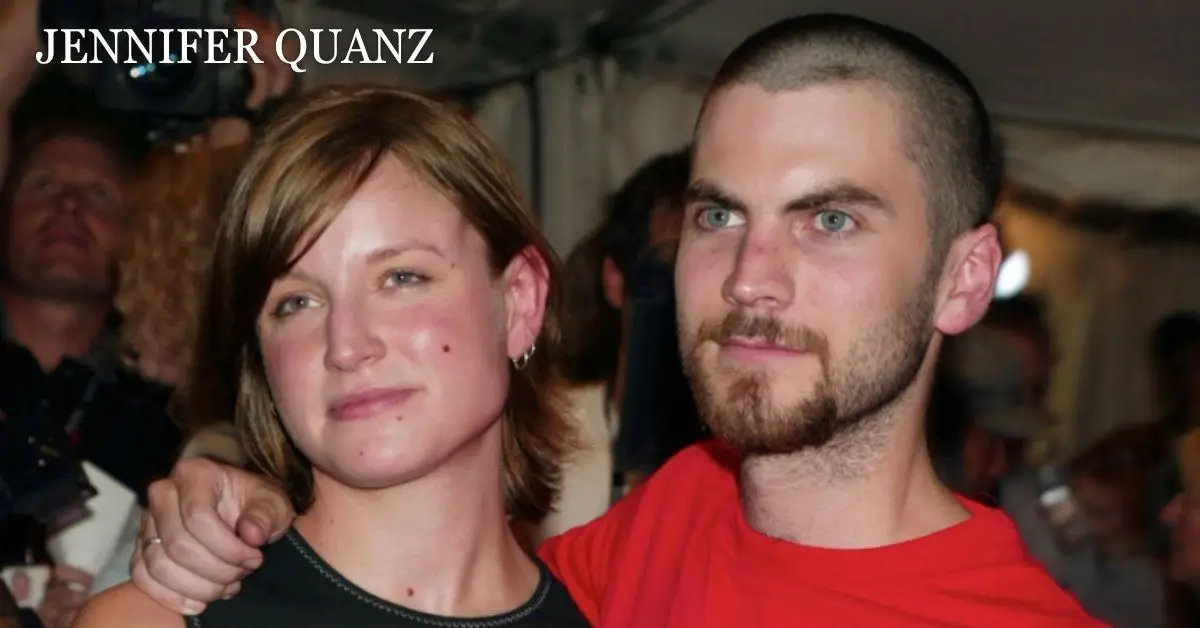

Jennifer Quanz: Life, Career, Legacy & Inspiring Journey in Figure Skating

The world of figure skating has produced many elegant and technically gifted athletes, but few have left a quiet yet lasting impression like Jennifer Quanz. Known for her artistry, discipline, and contribution to ice dance, she represents a generation of skaters who balanced athletic rigor with expressive performance. Her journey reflects dedication, early training challenges, and the pursuit of excellence in a highly competitive sport.

In exploring Jennifer Quanz, we uncover more than just competitive records—we see the story of persistence, partnership, and evolution within the skating world. Her career is a reflection of how athletes shape identity through years of practice, international competitions, and artistic expression on ice. This article takes a deep dive into her life, achievements, and legacy.

From early beginnings to her rise in competitive figure skating, Jennifer Quanz stands as a figure of inspiration. Her story is not only about medals or rankings but about passion and resilience that shaped her journey. Let’s explore her life in detail through different dimensions of her skating career and personal development.

Early Life and Foundations of Jennifer Quanz

Jennifer Quanz developed an interest in ice skating at a young age, growing up during a period when figure skating was gaining significant global attention. Like many athletes, her journey began on local ice rinks where curiosity turned into commitment. Early exposure to skating helped her build fundamental balance, coordination, and artistic awareness.

Her training years were marked by discipline and repetition, essential qualities for any aspiring figure skater. Coaches recognized her potential early on, particularly her ability to combine technical precision with expressive movement. This balance would later become a defining feature of her skating identity.

Family support played a crucial role in her development, as competitive skating demands both emotional and financial investment. Her early life set the foundation for a career that would eventually place her among recognized names in American ice dance.

Jennifer Quanz and the Rise in Competitive Ice Dance

The competitive journey of Jennifer Quanz began as she transitioned from local training environments to national-level competitions. Ice dance, unlike singles skating, demands perfect synchronization with a partner, musical interpretation, and deep trust. She quickly adapted to these requirements and demonstrated strong potential.

During her rise, she participated in several key competitions that shaped her competitive identity. Each performance refined her technical skills and enhanced her artistic expression. Judges and audiences often noted her elegance and precision on the ice, which distinguished her from other emerging skaters.

This period of her life was also defined by intense training schedules, travel for competitions, and continuous refinement of routines. Her commitment to improvement highlighted the dedication required to succeed in elite figure skating.

Training Discipline and Artistic Development in Jennifer Quanz’s Career

One of the defining aspects of Jennifer Quanz’s career was her disciplined training approach. Figure skating requires rigorous daily practice, often including on-ice drills, off-ice conditioning, choreography sessions, and performance analysis. She embraced this lifestyle with consistency and determination.

Her artistic development was equally important. Ice dance is not just about athletic skill but also storytelling through movement. She worked closely with choreographers to create routines that reflected emotion, rhythm, and musical depth. This artistic focus helped her build a distinct presence on the ice.

Over time, she refined her ability to connect with audiences, transforming technical performances into expressive narratives. This balance of athletic and artistic elements became a hallmark of her skating style.

Competitive Highlights and Career Milestones

Throughout her skating journey, Jennifer Quanz achieved several notable milestones that reflected her growth as an athlete. Competing at national championships and international events, she gained recognition for her consistency and performance quality.

Her routines often showcased strong technical elements combined with fluid transitions, which are critical in ice dance scoring. Judges appreciated her precision in footwork and synchronization with her partner, which contributed to her competitive success.

These milestones were not just about rankings but also about personal achievement. Each competition represented years of preparation, sacrifice, and mental resilience. Her career highlights serve as a testament to her dedication to the sport.

Jennifer Quanz and the Evolution of Ice Dance Partnerships

A key element in ice dance is partnership, and Jennifer Quanz experienced firsthand the complexities and rewards of this dynamic. A successful ice dance team relies on trust, timing, and shared artistic vision. Her partnerships played a central role in shaping her competitive identity.

Working closely with a partner requires continuous communication and adaptation. From choreography synchronization to emotional expression, every detail must align perfectly. Jennifer Quanz demonstrated strong adaptability in maintaining harmony within her partnerships.

These collaborations allowed her to explore different skating styles and refine her performance techniques. The evolution of her partnerships contributed significantly to her growth as a professional ice dancer.

Challenges, Setbacks, and Resilience in Jennifer Quanz’s Journey

Like many athletes in competitive sports, Jennifer Quanz faced challenges that tested her resilience. Injuries, performance pressure, and intense competition schedules are common obstacles in figure skating. Navigating these challenges required mental strength and determination.

Setbacks in competition did not define her career but instead contributed to her development. Each difficulty became an opportunity to improve technique, endurance, and performance strategy. This mindset is essential for long-term success in sports.

Her resilience reflects the broader reality of athletic careers, where persistence often matters more than immediate success. Through challenges, she continued to refine her skills and maintain her commitment to ice dance.

Influence, Recognition, and Jennifer Quanz’s Legacy in Skating

The influence of Jennifer Quanz extends beyond her competitive results. She represents a generation of skaters who contributed to the artistic evolution of ice dance in the United States. Her performances inspired younger athletes and contributed to the sport’s growing popularity.

Recognition within the skating community often comes from consistency, style, and contribution to team dynamics. Jennifer Quanz earned respect for her dedication and artistic interpretation on ice, leaving a lasting impression on audiences and peers.

Her legacy is reflected in the continued appreciation of her performances and the inspiration she provides to aspiring skaters. She remains a symbol of discipline and artistic excellence in the figure skating world.

Conclusion

The journey of Jennifer Quanz in figure skating is a powerful example of dedication, artistry, and resilience. From early training days to competitive achievements, her story reflects the depth of commitment required in ice dance. She demonstrated how athletic performance and artistic expression can merge into a seamless experience on ice.

Jennifer Quanz continues to be remembered for her contribution to the sport, her disciplined approach, and her ability to inspire through performance. Her legacy remains meaningful in the figure skating community, reminding future athletes that success is built through passion, persistence, and teamwork.

Ultimately, Jennifer Quanz stands as a testament to what can be achieved through hard work and artistic vision, leaving behind a career that continues to inspire admiration and respect.
